(PDF) Incorporating representation learning and multihead attention

By a mysterious writer

Description

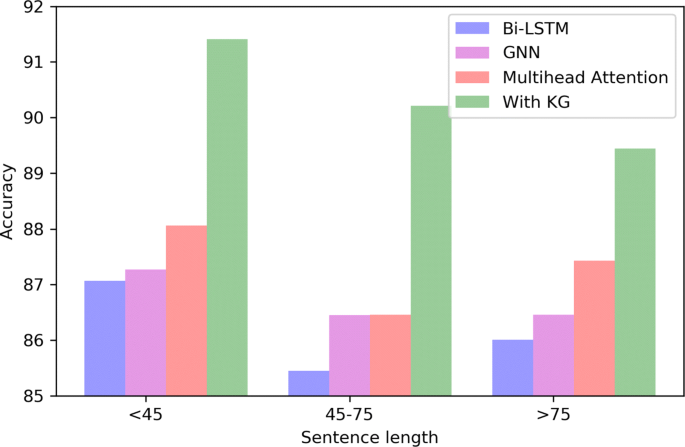

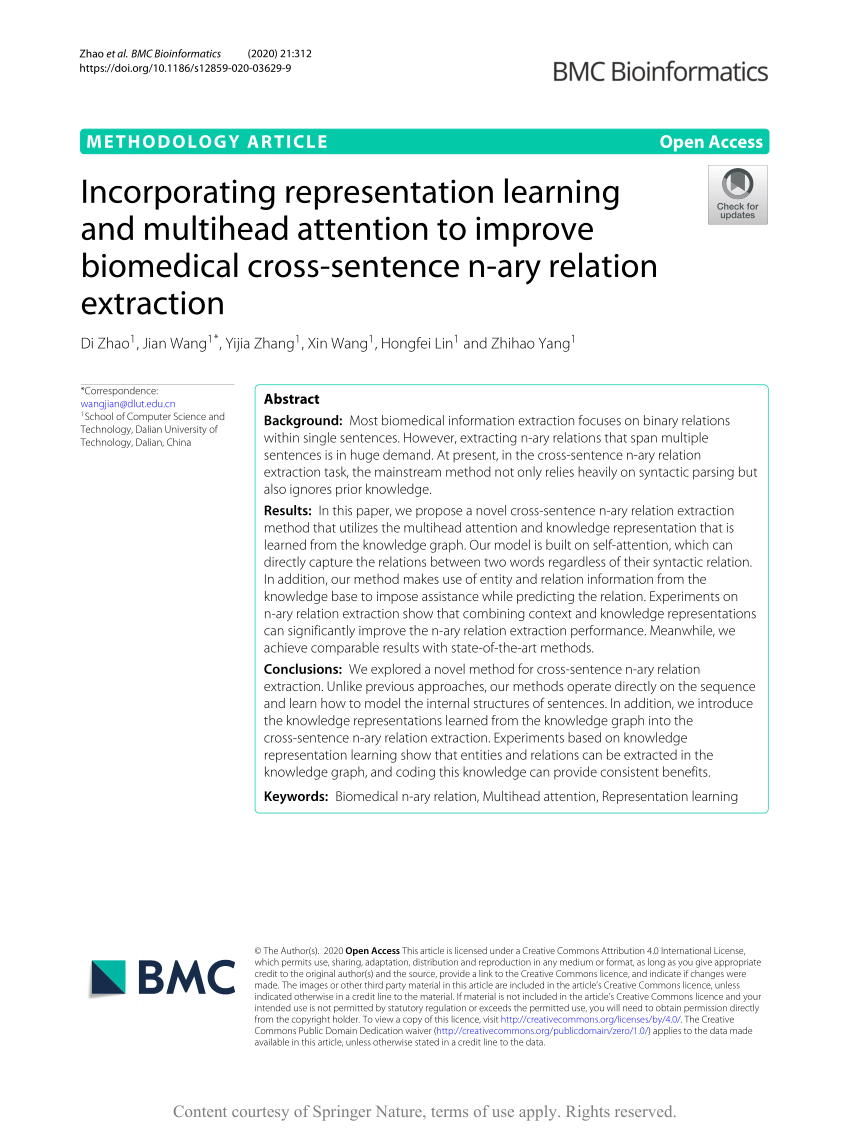

Incorporating representation learning and multihead attention to improve biomedical cross-sentence n-ary relation extraction, BMC Bioinformatics

[PDF] Dependency-Based Self-Attention for Transformer NMT

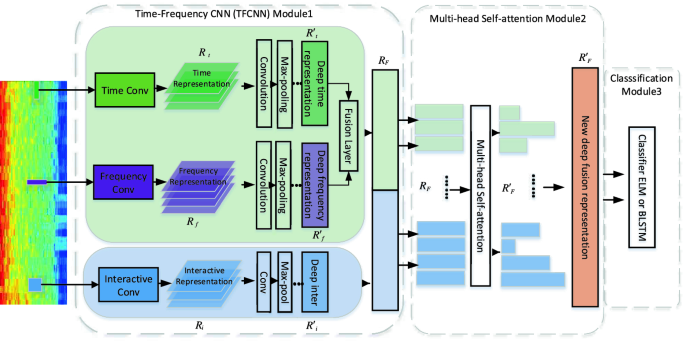

Time-Frequency Deep Representation Learning for Speech Emotion Recognition Integrating Self-attention

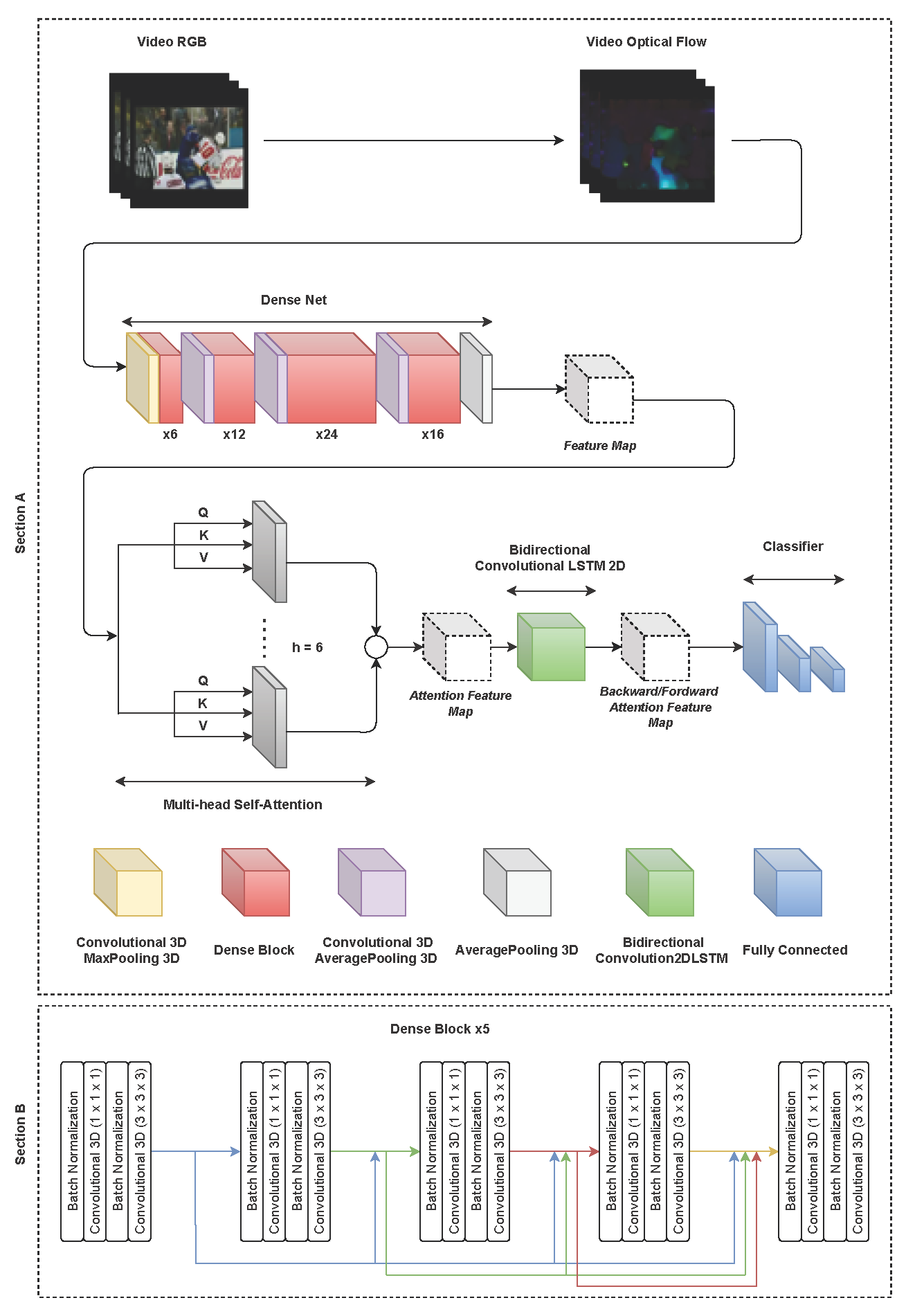

Pipeline of the multihead enhanced attention mechanism. (a) shows the

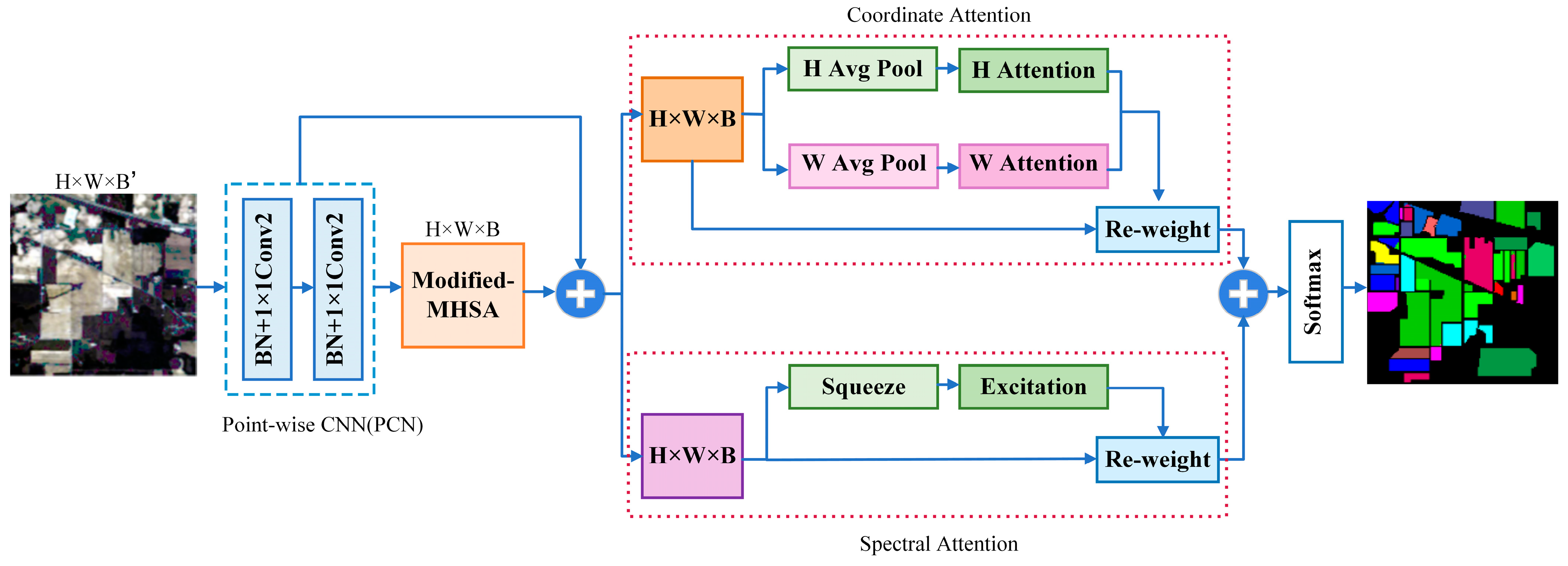

J. Imaging, Free Full-Text

[PDF] Incorporating VAD into ASR System by Multi-task Learning

[PDF] Interpretable Multi-Head Self-Attention Architecture for Sarcasm Detection in Social Media

Electronics, Free Full-Text

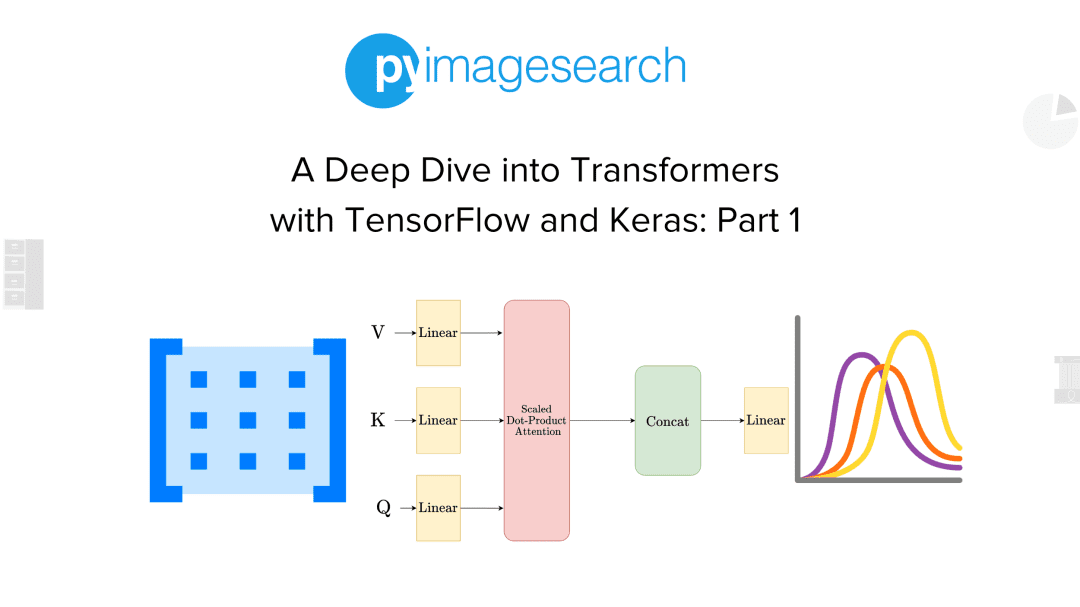

A Deep Dive into Transformers with TensorFlow and Keras: Part 1 - PyImageSearch

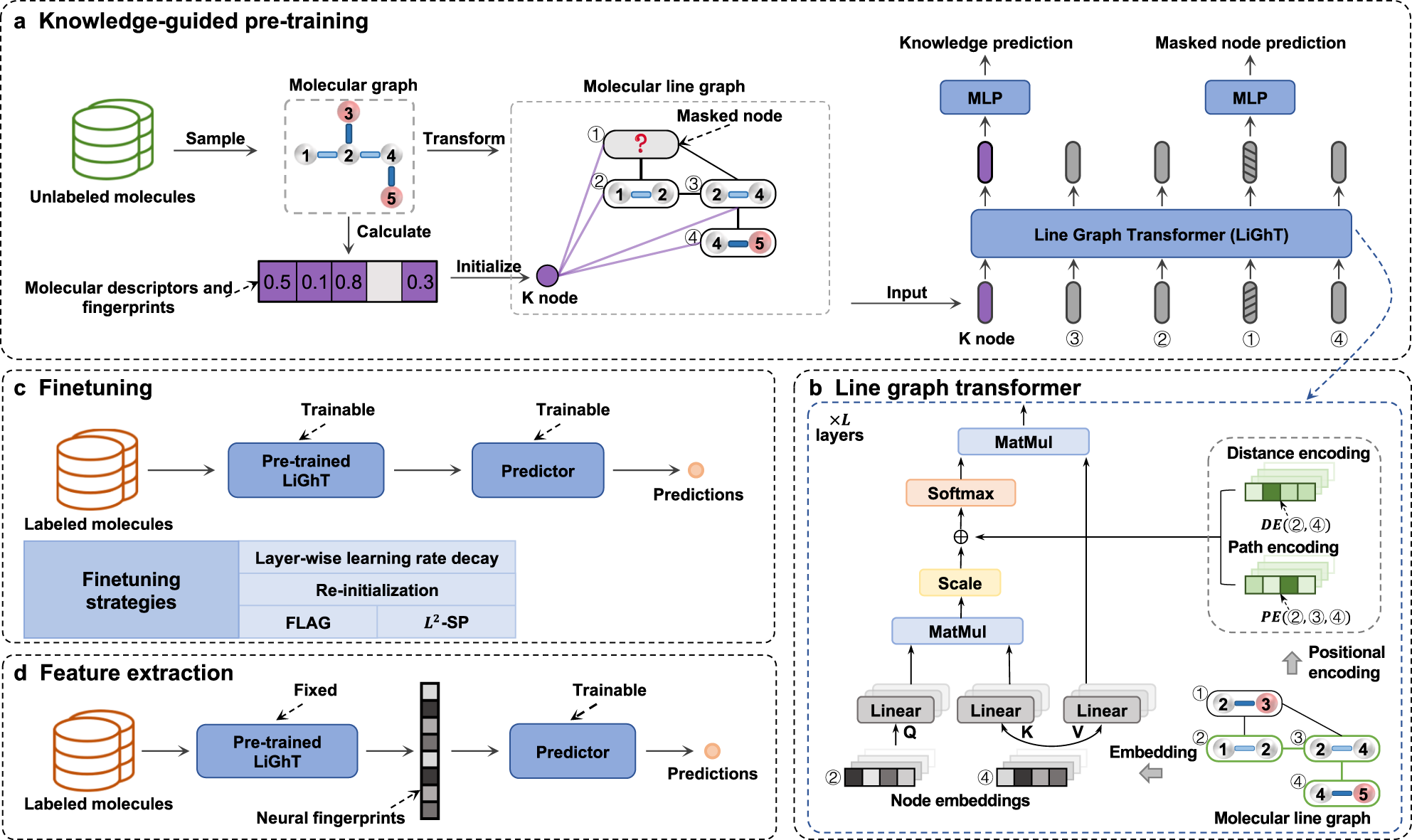

A knowledge-guided pre-training framework for improving molecular representation learning

(PDF) Incorporating representation learning and multihead attention to improve biomedical cross-sentence n-ary relation extraction

How to Implement Multi-Head Attention from Scratch in TensorFlow and Keras
